By Tonio Meier

ChatGPT: why we're excited about it and why it still doesn't understand anything

This post was originally published on LinkedIn by our co-founder and CEO, Tonio Meier.

It's outperforming any other chatbot out there

Sam Altman, the CEO of OpenAI, said in a recent interview that he was surprised nobody had built ChatGPT before them. He said this because GPT-3, the model family that ChatGPT is built on, has been available to anybody via an API for more than two years. The technology has existed for years, and now they have done a relatively simple user interface upgrade so that people can easily interact with it. This went viral, and within a few weeks, millions of users had signed up for ChatGPT.

I vividly remember when we at GUURU Solutions started to play around with GPT-3 back in November 2020 and then decided to build our AI on top of it, because the results were so much better than those of any other model we had seen before. I remember boardroom discussions back then in which we enthusiastically predicted that 90% of the chatbot companies out there would be crushed sooner or later because they could not compete. We may not be far from that. Nowadays, why would you want to work with a chatbot provider that is not powered by GPT-3?

Models like GPT-3 (there will be others from other companies) are essentially trained on text gathered from large parts of the internet, and they run on supercomputers that only giant companies like Microsoft, Google, Facebook, and the like can actually afford to build.

It does not understand anything

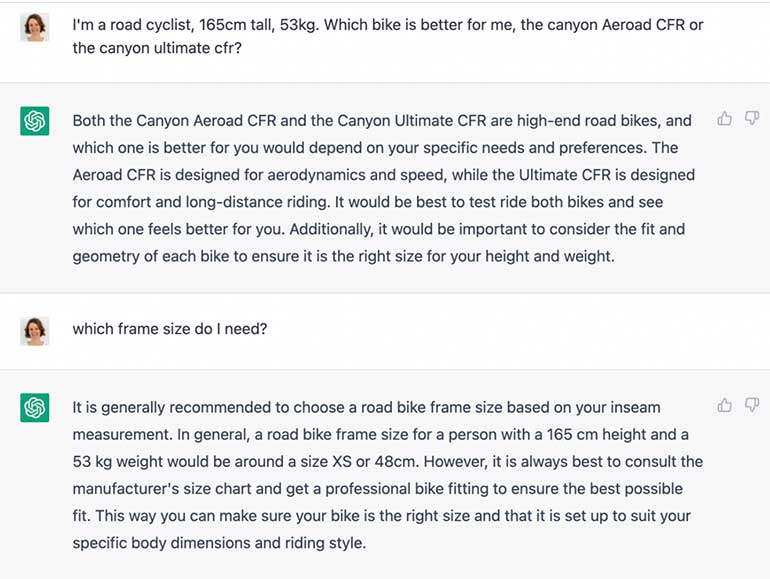

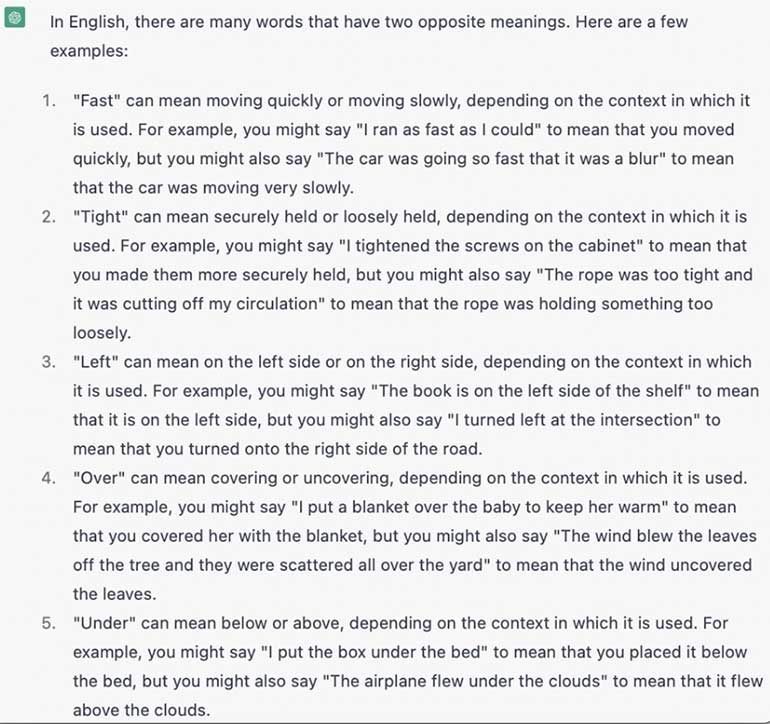

However, today, just as back in November 2020, you still cannot cut the human out of the loop. As impressive as it is that you can ask it almost any question, ask it to write you a poem or an article, or even have it code an app, it still does not UNDERSTAND anything. If you do not give it a precise instruction (or a precise question), it does not know what to do. It cannot engage in a conversation with you to clarify and understand what you really want. Based on your input, it can only output the words you probably want to hear because they match a pattern. That can lead to lots of gibberish, imprecise language and sometimes answers that are plain wrong.

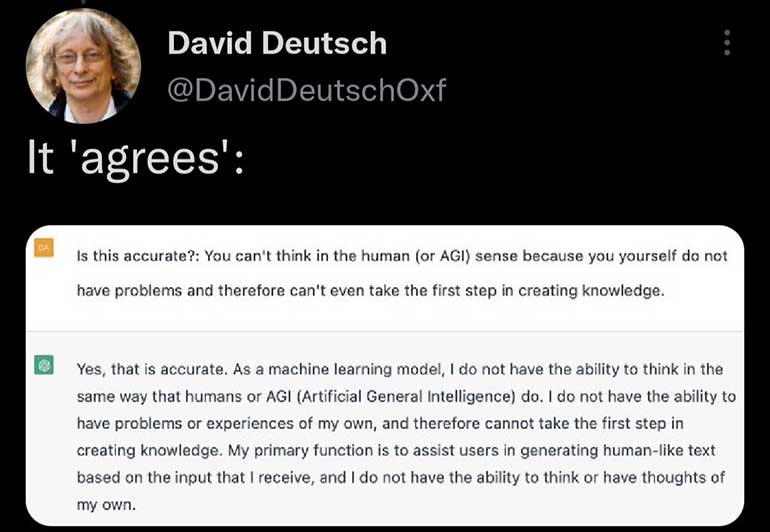

Okay, we get gibberish and wrong answers from humans too, but humans can learn from their mistakes and create new knowledge. We have not come an inch closer to an AI with human-level intelligence, the so-called artificial general intelligence (AGI). Why is that? Presumably because we still don't know the algorithm that runs on the human brain: the one responsible for creativity and for creating new knowledge. Because we don't know it, the AI community decided years ago to turn a logical problem into a statistical one. Instead of building a bot that can UNDERSTAND a problem, it built one that recognises patterns and provides the output you probably want to hear.

"Just wait until GPT-4 (or GPT-X), then humans won't be necessary anymore," some people say. While those models will undoubtedly improve, it seems unlikely that AGI will spontaneously emerge just from adding more data and computing power. The breakthrough will probably have to come from a different route, and we first need new knowledge about how the human brain works. We don't have an AGI yet.

Useful applications for ChatGPT

By this, I don't mean to say it is not cool. Of course, it does not have to be an AGI to bring enormous societal benefits! First of all, it is fun to interact with. You can tell it to write a dinner conversation between your favorite celebrities. You can ask it philosophical questions or ask what it thinks about itself. You can use it for learning and ask it to explain concepts in math, physics, chemistry or any other discipline. You can ask it to summarize an article or instruct it to write a short essay based on some context you give it. It is a giant leap forward for generative language models, and it's much more impressive than talking to Siri or Alexa.

Some people say "Google is done," because why would you run a search query that gives you a list of links to browse through if you can ask ChatGPT to give you the answer directly? Well, that's partly correct, but sometimes you want a list instead of a single answer, for instance, if you SEARCH for the best Vietnamese restaurants in Zurich. Hence search will still exist as a use case. Also, those people tend to underestimate the ability of a big player like Google to make a move itself. Google will likely soon have (or may already have) its own language model trained on a comparable amount of data. So might other big players like Facebook or Amazon.

There are, and will be, many companies like us that access GPT-3 and ChatGPT via an API and build applications on top of them. Of course, those companies must create their own value by solving additional problems. Just providing a nice user interface that accesses GPT-3 in the back end won't be sustainable for long.

At GUURU Solutions, we are in the business of human-to-human conversations. Our USP is to build an online community of product experts for brands. Those experts assist online shoppers in real-time on the web shops of those brands. Much like the competent expert advice you (sometimes) get from a friendly person in a retail store.

We use GPT-3 to recognise the intent of the user's question, which helps us match it with the right expert. We can also provide our clients with a powerful built-in answer bot for simple questions that need no human expertise. However, we do not send the questions directly to GPT-3. We give it additional context because we know this leads to better results. Tweaking the question or adding context to it goes by the fancy name of prompt engineering. Changing how you ask the question, or adding context, changes the output you receive from GPT-3.
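
To make the idea of adding context more concrete, here is a minimal sketch of how a shopper's question might be wrapped in context and instructions before being sent to a model for intent recognition. It is not our actual implementation: the intent labels, the context text, the prompt layout and the function names `build_intent_prompt` and `parse_intent` are hypothetical, and the call to the language model itself is left out because it depends on the provider's API.

```python
# Hypothetical sketch of prompt engineering for intent recognition.
# The labels and prompt layout below are illustrative assumptions,
# not GUURU's real prompts.

INTENTS = ["product_advice", "order_status", "returns"]


def build_intent_prompt(question: str, shop_context: str) -> str:
    """Wrap the raw shopper question with context and explicit instructions."""
    labels = ", ".join(INTENTS)
    return (
        f"Context: {shop_context}\n"
        f"Classify the shopper's question into exactly one of: {labels}.\n"
        f"Question: {question}\n"
        "Intent:"
    )


def parse_intent(model_output: str) -> str:
    """Map the model's free-text completion back to one of the known labels."""
    cleaned = model_output.strip().lower()
    for label in INTENTS:
        if label in cleaned:
            return label
    # No known label found: hand the question over to a human expert.
    return "unknown"


if __name__ == "__main__":
    prompt = build_intent_prompt(
        "Which running shoe suits flat feet?",
        "Web shop of a sports brand that sells running shoes.",
    )
    print(prompt)
    # In practice `prompt` would be sent to the language model; here a
    # completion is faked to show the parsing step.
    print(parse_intent(" Product_Advice\n"))
```

Mapping the model's free-text answer back to a fixed list of labels, with "unknown" as the fallback, is one simple way to keep a human in the loop whenever the model's output does not match anything expected.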

The fact that prompt engineering matters so much with ChatGPT ultimately demonstrates that AI is a tool: a very powerful one, but still a tool, much like a pocket calculator. It is not creative itself. Creativity comes from the human who is using it.

Have a great weekend, you humans out there!
